What is Azure Storage?

Azure Storage is a cloud-based service that provides scalable, secure and highly available data storage solutions for applications running in the cloud. It offers different types of storage options like Blob storage, Queue storage, Table storage and File storage.

Blob storage holds unstructured data such as images, video, audio and documents, while Queue storage provides messaging between components, which helps in building scalable, loosely coupled applications. Table storage is a NoSQL key-value store for structured datasets, and File storage provides managed file shares that behave like traditional file servers.

Azure Storage provides developers with a massively scalable object store for text and binary data hosting that can be accessed via REST API or by using various client libraries in languages like .NET, Java and Python. It also offers features like geo-replication, redundancy options and backup policies which provide high availability of data across regions.

The Importance of Implementing Best Practices

Implementing best practices when using Azure Storage can save you from many problems down the road. For instance, security breaches or performance issues can lead to downtime or loss of important data which could have severe consequences on your organization’s reputation or revenue.

By following best practices guidelines provided by Microsoft or other industry leaders you can ensure improved security, better performance and cost savings. Each type of Azure Storage has its own unique characteristics that may require specific best practices to be followed to achieve optimal results.

It is therefore essential to understand the type of data being stored and its usage patterns before designing the storage architecture. In this article we’ll explore best practices for securing your Azure Storage account against unauthorized access, optimizing its performance, ensuring high availability through replication and disaster recovery, controlling costs, and managing data.

Security Best Practices

Use of Access Keys and Shared Access Signatures (SAS)

Access keys and shared access signatures (SAS) are central to Azure Storage security. Access keys work like a username and password for your storage account: anyone who holds one has full access to the account, so keys should be protected like any other secret. To minimize risk, it is recommended to use SAS instead of access keys when possible.

SAS provide granular control over permissions, expiration dates, and access protocol restrictions. This allows you to share specific resources or functionality with external parties without exposing your entire storage account.
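As a rough illustration of those three controls, the sketch below audits a SAS query string before it is handed out. It relies on the standard SAS query fields `sp` (permissions), `se` (expiry) and `spr` (allowed protocols). The function name `audit_sas`, the 24-hour limit and the read/list-only rule are assumptions made for this example, and the token values are fake.

```python
from datetime import datetime, timedelta, timezone
from urllib.parse import parse_qs


def audit_sas(token: str, now: datetime,
              max_lifetime: timedelta = timedelta(hours=24)) -> list[str]:
    """Return a list of findings for a SAS query string; empty means it passed."""
    # parse_qs returns a list of values per field; keep the first one.
    params = {key: values[0] for key, values in parse_qs(token.lstrip("?")).items()}
    findings = []

    # spr: allowed protocols. Anything other than "https" permits plain HTTP.
    if params.get("spr") != "https":
        findings.append("protocol is not restricted to HTTPS")

    # se: expiry in UTC (assumed here to use the seconds-precision format).
    expiry_text = params.get("se")
    if expiry_text is None:
        findings.append("no expiry time on the token")
    else:
        expiry = datetime.strptime(expiry_text, "%Y-%m-%dT%H:%M:%SZ")
        expiry = expiry.replace(tzinfo=timezone.utc)
        if expiry - now > max_lifetime:
            findings.append("lifetime exceeds the allowed maximum")

    # sp: permission letters. Flag anything beyond read (r) and list (l).
    extra = sorted(set(params.get("sp", "")) - set("rl"))
    if extra:
        findings.append("grants more than read/list: " + "".join(extra))

    return findings
```

A short-lived, read-only, HTTPS-only token returns an empty list, while a token with write and delete permissions and a one-year expiry returns one finding per problem. Pass a timezone-aware `now`, for example `datetime.now(timezone.utc)`.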

Implementation of Role-Based Access Control (RBAC)

Role-based access control (RBAC) allows you to assign specific roles to users or groups based on their responsibilities within your organization. RBAC is a key element in implementing least privilege access control, which means that users have only the permissions required for their job function. This reduces the risk of unauthorized access and data breaches and supports compliance with privacy regulations such as GDPR.
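The least-privilege idea can be sketched as picking the narrowest role that still covers what a user needs. The role names below are Azure built-in roles for blob data, but the capability sets attached to them are a deliberate simplification for illustration, and `least_privileged_role` is a hypothetical helper, not part of any SDK.

```python
# Built-in role names, ordered from narrowest to broadest.
# The capability sets are simplified assumptions, not the real role definitions.
ROLES = [
    ("Storage Blob Data Reader", {"read"}),
    ("Storage Blob Data Contributor", {"read", "write", "delete"}),
    ("Storage Blob Data Owner", {"read", "write", "delete", "manage_access"}),
]


def least_privileged_role(needed: set[str]) -> str:
    """Return the first (narrowest) role whose capabilities cover `needed`."""
    for name, capabilities in ROLES:
        if needed <= capabilities:
            return name
    raise ValueError(f"no role covers {sorted(needed)}")
```

For example, a reporting job that only reads blobs maps to Storage Blob Data Reader, while an ingestion service that reads and writes maps to Storage Blob Data Contributor.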

Encryption and SSL/TLS usage

Encryption is essential for securing data at rest and in transit. Azure Storage encrypts data at rest by default using service-managed keys or customer-managed keys stored in Azure Key Vault.

For added security, it is recommended to use SSL/TLS for data transfers over public networks such as the internet. By encrypting data in transit, unauthorized third-parties will not be able to read or modify sensitive information being transmitted between client applications and Azure Storage.
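On the client side, encryption in transit can be enforced with a couple of small guards. This is a minimal sketch using only the Python standard library; the helper names and the choice of TLS 1.2 as the floor are assumptions for this example, and the endpoint in the usage note is a placeholder account name.

```python
import ssl
from urllib.parse import urlparse


def tls_context() -> ssl.SSLContext:
    """Build a client TLS context that verifies certificates and refuses old TLS."""
    context = ssl.create_default_context()  # certificate and hostname checks on
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def require_https(endpoint: str) -> str:
    """Reject any storage endpoint that would send data unencrypted."""
    if urlparse(endpoint).scheme != "https":
        raise ValueError(f"refusing non-HTTPS endpoint: {endpoint}")
    return endpoint
```

Calling `require_https("https://exampleaccount.blob.core.windows.net")` returns the URL unchanged, while the same URL with `http://` raises `ValueError` before any request is made.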

Conclusion: Security Best Practices

Implementing proper security measures such as using access keys/SAS, RBAC, encryption, and SSL/TLS usage can help protect your organization’s valuable assets stored on Azure Storage from unauthorized access and breaches. It’s important to regularly review and audit your security protocols to ensure that they remain effective and up-to-date.

Performance Best Practices

Proper Use of Blob Storage Tiers

When it comes to blob storage, Azure offers three different tiers: hot, cool, and archive. Each tier has a different price point and is optimized for different access patterns. Choosing the right tier for your specific needs can result in significant cost savings.

For example, if you have data that is frequently accessed or modified, the hot tier is the most appropriate option as it provides low latency access to data and is intended for frequent transactions. On the other hand, if you have data that is accessed infrequently or stored primarily for backup/archival purposes, then utilizing the cool or archive tiers may be more cost-effective.

It’s important to note that changing tiers is not always instant. Moving data out of the archive tier in particular requires the data to be rehydrated, which can take several hours. Hence you should carefully evaluate your usage needs before settling on a particular tier.
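The tier decision described above can be written down as a small rule of thumb. The thresholds in this sketch are hypothetical placeholders, not Microsoft guidance; tune them to your own access patterns and pricing.

```python
# Hypothetical thresholds for illustration only.
HOT_MIN_READS_PER_MONTH = 4.0
ARCHIVE_MAX_READS_PER_MONTH = 0.1


def suggest_tier(reads_per_month: float, tolerates_hours_of_delay: bool) -> str:
    """Suggest a blob tier from how often data is read and how long a read may wait."""
    if reads_per_month >= HOT_MIN_READS_PER_MONTH:
        return "hot"
    # Archive only makes sense if callers can wait for the data to come back.
    if reads_per_month <= ARCHIVE_MAX_READS_PER_MONTH and tolerates_hours_of_delay:
        return "archive"
    return "cool"
```

With these placeholder thresholds, website images read daily map to hot, monthly reports map to cool, and compliance backups that are almost never read map to archive.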

Utilization of Content Delivery Network (CDN)

CDNs deliver content with high performance and low latency across geographical locations. By putting a CDN in front of an Azure Storage account, you can bring your content closer to users by caching blobs at edge locations around the globe.

This means that when a user requests content from your website or application hosted in Azure Storage using CDN, they will receive that content from their nearest edge location rather than waiting for content delivery from a central server location (in this case – Azure storage). By using CDNs with Azure Storage Account in this way, you can deliver high-performance experiences even during peak traffic times while reducing bandwidth costs.

Optimal Use of Caching

Caching helps improve application performance by storing frequently accessed data closer to end-users without having them make requests directly to server resources (in this case – Azure Storage). This helps reduce latency and bandwidth usage.

Azure offers managed caching through Azure Cache for Redis (formerly Azure Redis Cache); the older Azure Managed Cache Service has been retired. A cache can be used in conjunction with Azure Storage to improve overall application performance and reduce repeated requests to the storage account.

When utilizing caching with Azure Storage, it’s important to consider the cache size and eviction policies based on your application needs. Also, you need to evaluate the type of data being cached as some data types are better suited for cache than others.
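To make cache size and eviction policy concrete, here is a minimal in-process sketch that combines a size limit (least-recently-used eviction) with a time-to-live. It is not tied to any Azure service: the `fetch` callable stands in for whatever code reads a blob from storage, and the class name `BlobCache` is made up for this example.

```python
import time
from collections import OrderedDict
from typing import Callable


class BlobCache:
    """Cache-aside helper with a maximum size (LRU eviction) and a TTL."""

    def __init__(self, fetch: Callable[[str], bytes], max_items: int,
                 ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch          # stand-in for the real storage read
        self._max_items = max_items
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = OrderedDict()  # name -> (stored_at, data)

    def get(self, name: str) -> bytes:
        now = self._clock()
        entry = self._entries.get(name)
        if entry is not None and now - entry[0] < self._ttl:
            self._entries.move_to_end(name)    # mark as most recently used
            return entry[1]
        data = self._fetch(name)               # miss or stale: go to storage
        self._entries[name] = (now, data)
        self._entries.move_to_end(name)
        while len(self._entries) > self._max_items:
            self._entries.popitem(last=False)  # evict the least recently used
        return data
```

A small `max_items` keeps memory bounded at the cost of more misses, and a short TTL keeps data fresh at the cost of more storage reads. Data that changes often or is rarely re-read is usually a poor fit for caching.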

Availability and Resiliency Best Practices

One of the most important considerations for any organization’s data infrastructure is ensuring its availability and resiliency. In scenarios where data is critical to business operations, any form of downtime can result in significant losses. Therefore, it is important to have a plan in place for redundancy and disaster recovery.

Replication options for data redundancy

Azure Storage provides users with multiple replication options to ensure that their data is safe from hardware failures or other disasters. The three primary replication options available are:

- Locally redundant storage (LRS): This option creates multiple copies of your data within a single Azure datacenter. It does not replicate your data across zones or regions, so there’s still a risk of data loss if a disaster affects that datacenter.

- Zone-redundant storage (ZRS): This option replicates your data synchronously across three availability zones within a single region, increasing fault tolerance.

- Geo-redundant storage (GRS): This option replicates your data asynchronously to another geographic location, providing an additional layer of protection against natural disasters or catastrophic events affecting an entire region.
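The three options above can be summarized by the largest failure each one is designed to survive. The sketch below encodes that summary and picks the narrowest option that meets a requirement. The scope labels and the helper name are assumptions for illustration, and the mapping is a simplification of the real service behavior.

```python
# Options ordered from narrowest to broadest protection.
# The scope names are labels invented for this sketch.
REDUNDANCY = [
    ("LRS", {"hardware"}),
    ("ZRS", {"hardware", "datacenter"}),
    ("GRS", {"hardware", "datacenter", "region"}),
]


def suggest_redundancy(must_survive: set[str]) -> str:
    """Return the first option whose covered failure scopes include `must_survive`."""
    for option, scopes in REDUNDANCY:
        if must_survive <= scopes:
            return option
    raise ValueError(f"no option covers {sorted(must_survive)}")
```

For example, `suggest_redundancy({"region"})` returns `"GRS"`, while a workload that only needs protection from disk or rack failures gets `"LRS"`.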

Implementation of geo-redundancy

The GRS replication option provides a higher level of resiliency because it replicates the data in the storage account to a second Azure region without manual intervention. If the primary region becomes unavailable due to natural disaster or system failure, the account can be failed over to the secondary region so that clients can continue accessing their data. Note that failover is normally something you initiate yourself rather than something that happens automatically, and that reading from the secondary region before a failover requires the read-access variant (RA-GRS).

Azure Storage offers GRS replication at a nominal cost, making it an attractive option for organizations that want to ensure their data is available to their clients at all times. It is important to note that while the GRS replication option provides additional resiliency, it does not replace the need for proper backups and disaster recovery planning.

Use of Azure Site Recovery for disaster recovery

Azure Site Recovery (ASR) is a cloud-based service that allows you to replicate workloads running on physical or virtual machines from your primary site to a secondary location. ASR is integrated with Azure Storage and can support the replication of your data from one region to another. This means that in case of a complete site failure or disaster, you can use ASR’s failover capabilities to quickly bring up your applications and restore access for your customers.

ASR also supports test failovers that do not disrupt production workloads or ongoing replication, allowing customers to validate their disaster recovery plans regularly. Additionally, Azure Site Recovery supports cross-platform replication, making it an ideal solution for organizations with heterogeneous environments.

Implementing these best practices will help ensure high availability and resiliency for your organization’s data infrastructure. By utilizing Azure Storage’s built-in redundancy options such as GRS and ZRS, as well as implementing Azure Site Recovery as part of your disaster recovery planning process, you can minimize downtime and maintain continuity even in the face of unexpected events.

Cost Optimization Best Practices

While Azure Storage offers a variety of storage options, choosing the appropriate storage tier based on usage patterns is crucial to keeping costs low. Blob Storage tiers, which include hot, cool, and archive storage, provide different levels of performance and cost. Hot storage is ideal for frequently accessed data that requires low latency and high throughput.

Cool storage is designed for infrequently accessed data that still requires quick access times but with lower cost. Archive storage is perfect for long-term retention of rarely accessed data at the lowest possible price.

Effective utilization of storage capacity is also important for cost optimization. Azure Blob Storage allows users to store up to 5 petabytes (PB) per account, but this can quickly become expensive if not managed properly.

By monitoring usage patterns and setting up automated policies to move unused or infrequently accessed data to cheaper tiers, users can avoid paying more than necessary for storage.

Another key factor in managing costs with Azure Storage is monitoring and optimizing data transfer costs. Data transfer fees are based on the amount of data transferred, and they apply mainly to data leaving Azure (egress), since inbound transfers are generally free. By compressing data or batching transfers together whenever possible, users can reduce these fees.

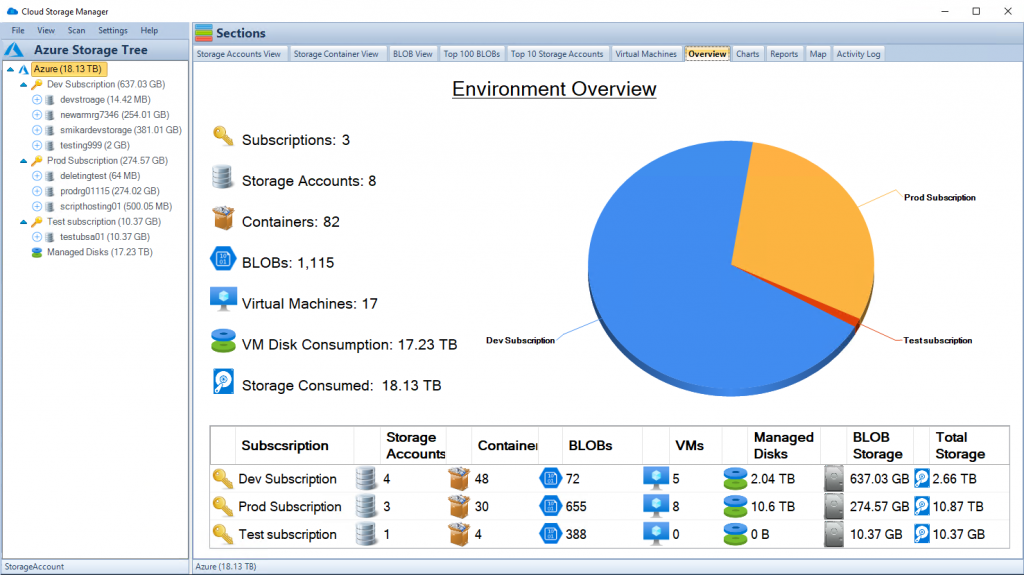

To further enhance cost efficiency and optimization, utilizing an intelligent management tool can make a world of difference. This is where SmiKar Software’s Cloud Storage Manager (CSM) comes in.

CSM is an innovative solution designed to streamline the storage management process. Its primary feature is its ability to analyze data usage patterns and minimise storage costs with analytics and reporting.

Cloud Storage Manager also provides an intuitive, user-friendly dashboard which gives a clear overview of your storage usage, helping you make more informed decisions about your storage needs.

CSM’s intelligent reporting can also identify and highlight opportunities for further savings, such as potential benefits from compressing certain files or batching transfers.

Cloud Storage Manager is an essential tool for anyone looking to make the most out of their Azure storage accounts. It not only simplifies storage management but also helps to significantly reduce costs. Invest in Cloud Storage Manager today, and start experiencing the difference it can make in your cloud storage management.

The Importance of Choosing the Appropriate Storage Tier Based on Usage Patterns

Choosing the appropriate Blob Storage tier based on usage patterns can significantly impact overall costs. For example, small files that are accessed frequently and need low-latency responses (such as images used on a website) belong in hot storage: it has the fastest response times, at a higher cost per GB stored than the cool or archive tiers.

The cooler tiers suit less frequently accessed files such as backups, where retrieval time is less critical and the lower cost per GB stored matters more. The archive tier is intended for long-term retention of rarely accessed data at a lower price point than cool storage.

However, access times to Archive storage can take several hours. This makes it unsuitable for frequently accessed files, but ideal for long term backups or archival data that doesn’t need to be accessed often.
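The trade-off between storage price and access price can be worked through with a toy cost model. Every price below is a made-up placeholder, since real prices vary by region and change over time, and the model ignores retrieval latency and per-operation charges. It is only meant to show why the cheapest tier depends on how much data is read back.

```python
# Hypothetical prices in dollars per GB, for illustration only.
PRICES = {
    "hot": {"store_per_gb": 0.020, "read_per_gb": 0.000},
    "cool": {"store_per_gb": 0.010, "read_per_gb": 0.010},
    "archive": {"store_per_gb": 0.002, "read_per_gb": 0.020},
}


def monthly_cost(tier: str, stored_gb: float, read_gb: float) -> float:
    """Storage cost plus retrieval cost for one month in the given tier."""
    price = PRICES[tier]
    return stored_gb * price["store_per_gb"] + read_gb * price["read_per_gb"]


def cheapest_tier(stored_gb: float, read_gb: float) -> str:
    """Tier with the lowest monthly cost under the placeholder prices."""
    return min(PRICES, key=lambda tier: monthly_cost(tier, stored_gb, read_gb))
```

Under these placeholder prices, 1,000 GB that is never read is cheapest in archive, while the same 1,000 GB read back five times over in a month is cheapest in hot, because retrieval charges outweigh the storage saving.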

Effective Utilization of Storage Capacity

One important aspect of effective utilization of storage capacity is understanding how much data each application stores and how often that data is actually read. Data that is rarely accessed should not be left in the hot or cool tiers, which cost more per GB than the archive tier, which is cheaper but slower. Another way to optimize Azure Storage costs is to set up automated policies that move unused or infrequently accessed files from the hot or cool tiers to the archive tier, where retrieval is slower but the cost per GB stored is significantly lower.
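An automated tiering policy of this kind is expressed in Azure Storage as a lifecycle management policy, which is a JSON document. The sketch below builds one such document in Python. The rule name, prefix and day counts are hypothetical values chosen for the example, so check the structure against the current lifecycle management documentation before applying it.

```python
import json


def lifecycle_policy(prefix: str, cool_after_days: int, archive_after_days: int) -> dict:
    """Build a lifecycle policy that tiers block blobs down as they age."""
    if archive_after_days <= cool_after_days:
        raise ValueError("archive threshold must be later than cool threshold")
    return {
        "rules": [
            {
                "enabled": True,
                "name": "tier-down-aging-blobs",  # hypothetical rule name
                "type": "Lifecycle",
                "definition": {
                    "filters": {
                        "blobTypes": ["blockBlob"],
                        "prefixMatch": [prefix],  # e.g. "container/folder"
                    },
                    "actions": {
                        "baseBlob": {
                            "tierToCool": {
                                "daysAfterModificationGreaterThan": cool_after_days
                            },
                            "tierToArchive": {
                                "daysAfterModificationGreaterThan": archive_after_days
                            },
                        }
                    },
                },
            }
        ]
    }


# Example values are placeholders: cool after 30 days, archive after 180.
policy_json = json.dumps(lifecycle_policy("logs/app", 30, 180), indent=2)
```

The resulting `policy_json` string is what you would supply when configuring the policy on the storage account, whether through the portal, a template or a command-line tool.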

Monitoring and Optimizing Data Transfer Costs

Data transfer fees can quickly add up when using Azure Storage, especially if there are large volumes of traffic. To minimize these fees, users should consider compressing their data before transfer as well as batching transfers together whenever possible.

Compression reduces the overall file size, and therefore the amount of data billed for each transfer, while batching combines many small transfers into fewer, larger ones and so avoids the per-operation charge on each one. Additionally, monitoring usage patterns and throttling connections during peak usage periods can help keep data transfer costs under control.
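Compression before transfer needs nothing beyond the standard library. This sketch compresses a payload with gzip and reports how much smaller it became. The sample data is synthetic and highly repetitive, so real-world savings will differ and depend heavily on the type of data.

```python
import gzip


def compress_for_upload(data: bytes) -> bytes:
    """Gzip a payload before sending it to storage."""
    return gzip.compress(data)


def savings_ratio(original: bytes, compressed: bytes) -> float:
    """Fraction of bytes saved, between 0 and 1 (negative if compression made it larger)."""
    return 1 - len(compressed) / len(original)


# Synthetic, repetitive log-style data; already-compressed formats gain little.
sample = b"timestamp,level,message\n" + b"2024-01-01T00:00:00Z,INFO,request served\n" * 1000
packed = compress_for_upload(sample)
restored = gzip.decompress(packed)  # what a reader of the blob would do
```

Text formats such as logs, CSV and JSON usually compress well, whereas images, video and archives that are already compressed see little or no benefit.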

Cost optimization best practices for Azure Storage consist of choosing the appropriate Blob Storage tier based on usage patterns, effective utilization of storage capacity through automated policies and proper monitoring strategies for optimizing data transfer costs. By adopting these best practices, users can reduce their overall expenses while still enjoying the full benefits of Azure Storage.

Data Management Best Practices

Implementing retention policies for compliance purposes

Implementing retention policies is an important aspect of data management. Retention policies ensure that data is kept for the appropriate amount of time and disposed of when no longer needed.

This can help organizations comply with various industry regulations such as HIPAA, GDPR, and SOX. In Azure Storage, retention is typically enforced with immutability policies (time-based retention and legal holds) and with lifecycle management rules.

Rules can be scoped using criteria such as a blob name prefix or blob index tags, which in turn can encode attributes such as content type, department or owner. Once a lifecycle rule has been created, it applies to existing data that matches it as well as to new data as it is created.

In order to ensure compliance, it is important to regularly review retention policies and make adjustments as necessary. This will help avoid any legal repercussions that could arise from failure to comply with industry regulations.

Use of metadata to organize and search data effectively

Metadata is descriptive information about a file that helps identify its properties and characteristics. Metadata includes information such as date created, author name, file size, document type and more.

It enables searching and filtering of files using relevant criteria. By using metadata effectively in Azure Storage accounts, you can organize your files into categories such as client names or project types, which makes it easier to find the right files quickly.

Additionally, tags can be used in search queries so you can find all files with a specific tag regardless of where they sit within a storage account. Metadata works best alongside consistent naming conventions, which make searching through old documents easier and help everyone on the team understand what each piece of stored content is.
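To show what filtering on metadata looks like, here is a minimal in-memory sketch. It assumes you already have a listing of blobs, each represented as a dictionary with a `name` and a `metadata` mapping. That shape, the helper name and the sample values are all assumptions for this example rather than the format of any particular SDK.

```python
def find_by_metadata(blobs: list[dict], **wanted: str) -> list[str]:
    """Return names of blobs whose metadata matches every requested key/value pair."""
    return [
        blob["name"]
        for blob in blobs
        if all(blob.get("metadata", {}).get(key) == value
               for key, value in wanted.items())
    ]


# Hypothetical listing; names and metadata values are placeholders.
listing = [
    {"name": "contracts/acme-2023.pdf", "metadata": {"client": "acme", "type": "contract"}},
    {"name": "contracts/globex-2023.pdf", "metadata": {"client": "globex", "type": "contract"}},
    {"name": "invoices/acme-0001.pdf", "metadata": {"client": "acme", "type": "invoice"}},
]
acme_contracts = find_by_metadata(listing, client="acme", type="contract")
```

Filtering client-side like this requires listing the blobs first, so for large accounts a server-side index or tag query is usually the better fit.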

Efficiently managing large-scale data transfers

Azure Blob Storage scales to handle large data transfers, but managing such transfers isn’t always easy and requires proper planning. Azure offers services such as Azure Data Factory that can help you manage large-scale data transfers.

This service helps in scheduling and orchestrating the transfer of large amounts of data from one location to another. Furthermore, Azure Storage accounts provide an efficient way to move large amounts of data into or out of the cloud using a few different methods including AzCopy or the Azure Import/Export service.

AzCopy is a command-line tool that can be used to upload and download data to and from Blob Storage while the Azure Import/Export service allows you to ship hard drives containing your data directly to Microsoft for import/export. Effective management and handling of large-scale file transfers ensures that your organization’s critical information is securely moved around without any loss or corruption.
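When AzCopy is driven from a script, it helps to build the command as an argument list rather than a shell string. The sketch below assembles an `azcopy copy` invocation for a recursive directory upload. The local path and destination URL are placeholders, the helper name is made up for this example, and it assumes AzCopy is installed and that the destination URL already carries valid credentials such as a SAS token.

```python
def azcopy_upload_command(local_dir: str, container_url: str) -> list[str]:
    """Build the argument list for uploading a directory tree with AzCopy."""
    return ["azcopy", "copy", local_dir, container_url, "--recursive"]


# Placeholder values; substitute your own path, account, container and SAS token.
command = azcopy_upload_command(
    "/data/exports",
    "https://exampleaccount.blob.core.windows.net/backups?<SAS-token>",
)

# To actually run it (requires AzCopy on PATH):
#   import subprocess
#   subprocess.run(command, check=True)
```

Passing a list to `subprocess.run` avoids shell quoting problems with the `&` characters that appear in SAS tokens.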

Conclusion

Recap on the importance of implementing Azure Storage best practices

Implementing Azure Storage best practices is critical to ensure optimal performance, security, availability, and cost-effectiveness. For security, that means managing access keys and SAS carefully, implementing RBAC, and using encryption and SSL/TLS. For performance, it means using Blob Storage tiers properly, along with a CDN and caching. For availability and resiliency, it means choosing the right replication option, implementing geo-redundancy, and planning disaster recovery with Azure Site Recovery. For cost optimization, it means selecting storage tiers based on usage patterns, using storage capacity effectively, and monitoring data transfer costs. For data management, it means implementing retention policies for compliance, using metadata to organize data, and managing large-scale data transfers efficiently. Together, these measures help enterprises achieve their business goals more efficiently.

Encouragement to continuously review and optimize storage strategies

However, it’s essential not just to implement these best practices but also to review them continuously. Cloud providers like Microsoft Azure add new features frequently, so there may be better approaches or new tools that companies can use to optimize their storage strategies further. By regularly reviewing your existing storage strategy against your evolving business needs, you’ll be able to identify gaps or areas that need improvement sooner rather than later.

It’s therefore wise to keep a lookout for industry trends related to cloud computing and, in this case, Microsoft Azure Storage best practices. Industry reports from reputable research firms like Gartner or IDC can provide insights into current trends around cloud-based infrastructure services.

The discussion forums within the Microsoft community, where professionals discuss their experiences with Azure services, can also give you an idea of what others are doing. In short, implementing Azure Storage best practices should be a top priority for businesses looking to leverage modern cloud infrastructure services.

By adopting these practices and continuously reviewing and optimizing them, enterprises can achieve optimal performance, security, availability, and cost-effectiveness while ensuring compliance with industry regulations. The benefits of implementing Azure Storage best practices far outweigh the costs of not doing so.